Sunday, March 25, 2018

CQRS Commands, stateless services, and thin clients

Looking at the CQRS architectural pattern, there are interactions that I didn't fully understand at first. For example, if the targets of queries and commands are the Managers from the IDesign methodology, this has a direct implication for the statefulness of the Manager versus the statelessness of the client. You need a thin client with little state; commands are routed to the Manager in the backend, and any updates then come back to the thin client as a response object or an update message.
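A minimal sketch of that round trip, in Python. All the names here (`RenameItem`, `ItemView`, `ItemManager`, `ThinClient`) are hypothetical, and I'm assuming for illustration that the Manager simply keeps its state in memory; a real Manager would more likely delegate to some store.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class RenameItem:
    """Command: a request to change state, carrying only the data it needs."""
    item_id: int
    new_name: str


@dataclass(frozen=True)
class ItemView:
    """Response object: a read-only snapshot sent back to the client."""
    item_id: int
    name: str
    version: int


@dataclass
class ItemManager:
    """Hypothetical backend Manager: the only place that holds state here."""
    _names: dict = field(default_factory=dict)
    _versions: dict = field(default_factory=dict)

    def handle(self, command: RenameItem) -> ItemView:
        # Apply the command, bump the version, and reply with a fresh snapshot.
        self._names[command.item_id] = command.new_name
        self._versions[command.item_id] = self._versions.get(command.item_id, 0) + 1
        return self.query(command.item_id)

    def query(self, item_id: int) -> ItemView:
        # Query side: read-only, never mutates state.
        return ItemView(item_id, self._names[item_id], self._versions[item_id])


class ThinClient:
    """Keeps no model state; it forwards commands and renders the reply."""

    def __init__(self, manager: ItemManager):
        self._manager = manager

    def rename(self, item_id: int, new_name: str) -> str:
        view = self._manager.handle(RenameItem(item_id, new_name))
        return f"{view.name} (v{view.version})"
```

The client never caches the item; everything it shows comes from the `ItemView` returned by the Manager, which is the sense in which it stays thin.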

Tuesday, March 06, 2018

Growing out of a legacy application

Trying to grow out of a dated legacy application is no small feat, and there is undoubtedly a cottage industry dedicated to consulting on how to do just that.

The most effective pattern I've encountered for this is the Strangler Application, which Martin Fowler named after the strangler figs he saw in Australia. But there seem to be better and worse ways of approaching a Strangler application.

I think Fowler's write-up captures the key aspects: a proper Strangler application needs both a static data strategy ("asset capture") and a dynamic behavior strategy ("event interception"). It is important that both parts take part in a two-way strategy for moving data and events back and forth between the old and new systems. Fowler stresses the strategic importance of this two-way strategy, as it creates flexibility in choosing the order of asset capture. Words to live by...
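To make the two halves concrete, here is a rough Python sketch under my own assumptions: `StranglerFacade` and `System` are hypothetical names, "event interception" is modeled as a facade that every event passes through, and "asset capture" as a set of asset types the new system has taken over. The two-way part is modeled by mirroring each event to whichever system does not currently own the asset.

```python
class System:
    """Stand-in for either the legacy or the new application."""

    def __init__(self, name):
        self.name = name
        self.store = {}  # asset_type -> list of events seen

    def handle(self, asset_type, event):
        # The owning system processes the event and reports who handled it.
        self.store.setdefault(asset_type, []).append(event)
        return self.name

    def mirror(self, asset_type, event):
        # The non-owning system only records the event to stay in sync.
        self.store.setdefault(asset_type, []).append(event)


class StranglerFacade:
    """Intercepts every event and routes it to the current owner."""

    def __init__(self, legacy, new):
        self._legacy = legacy
        self._new = new
        self._captured = set()  # asset types owned by the new system

    def capture(self, asset_type):
        self._captured.add(asset_type)

    def release(self, asset_type):
        # Because data flows both ways, a capture can be undone.
        self._captured.discard(asset_type)

    def dispatch(self, asset_type, event):
        if asset_type in self._captured:
            owner, other = self._new, self._legacy
        else:
            owner, other = self._legacy, self._new
        result = owner.handle(asset_type, event)
        other.mirror(asset_type, event)
        return result
```

Since both systems see every event, assets can be captured (or released) in any order, which is the flexibility the two-way strategy is meant to buy.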

Monday, March 05, 2018

<retro> What is Image Quality? </retro>

I saw a talk at AAPM 2015 by Kyle Myers (from the FDA's Office of Science and Engineering Laboratories) about the Channelized Hotelling Observer (Barrett et al 1993), which is a parametric model of visual performance based on spatial frequency selective channels in areas V1 and V2.

She was discussing the use of the CHO model for selecting optimal CBCT reconstruction parameters, but I ran across an obvious application of the technique to the problem of JPEG 2000 parameter selection (Zhang et al 2004).

It reminded me of the RDP plugin prototype I had developed a few years back, which demonstrated the ability to dynamically adjust compression performance during medical image review on an RDP or Citrix session. My prototype could also benefit from optimizing the CHO confidence interval (though it used JPEG XR instead of JPEG 2000), but possibly more interesting is the ability to predict the presentation parameters to be delivered over the channel, such as zoom/pan, window/level, or other radiometric filters to be applied to the image. It could also be used to determine when certain regions of the parameter space are not feasible for image review, because the confidence interval collapses (for instance, if you set window width=1).
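The window width=1 case is easy to see numerically. The sketch below is not a CHO; it only shows how the presentation step throws information away. I'm assuming a linear window function in the style of the DICOM VOI LUT (center/width, with the half-pixel offsets as I recall them from the standard), and `apply_window` is a hypothetical helper name.

```python
def apply_window(pixels, center, width, y_min=0, y_max=255):
    """Map stored pixel values to display values with a linear window."""
    if width < 1:
        raise ValueError("window width must be >= 1")
    lo = center - 0.5 - (width - 1) / 2.0
    hi = center - 0.5 + (width - 1) / 2.0
    out = []
    for x in pixels:
        if x <= lo:
            out.append(y_min)  # below the window: clamp to black
        elif x > hi:
            out.append(y_max)  # above the window: clamp to white
        else:
            # Inside the window: linear ramp (only reachable when width > 1).
            scaled = (x - (center - 0.5)) / (width - 1) + 0.5
            out.append(round(scaled * (y_max - y_min) + y_min))
    return out


# A synthetic 12-bit ramp standing in for image data.
ramp = list(range(0, 4096, 16))
wide = apply_window(ramp, center=2048, width=4096)
narrow = apply_window(ramp, center=2048, width=1)
print(len(set(wide)), "display levels with a full-range window")
print(len(set(narrow)), "display levels with window width=1")
```

With width=1 every pixel lands on either black or white, so any signal that lives in the gray-level differences is gone before an observer, human or model, ever sees it.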

So if anyone asks "What is Image Quality", you can tell them: "Image Quality = Maximized CHO Confidence Interval" (or maximized CHO Area Under Curve). Got it?

P.S. There is a very nice Matlab implementation of the CHO model in a package called IQModelo, with some examples of calculating CI and AUC.

Friday, March 02, 2018

Better Image Review Workflows Part III: Analysis

The central purpose of reviewing images is to extract meaning from the images and then base a decision on this meaning. Currently the human visual system is the only system that is known to be able to extract all the meaning from a medical image, and even then it may need some help.

As algorithms become better at meaning extraction, it's important to keep in mind that anything an algorithm can make of an image should, in theory, also be visible to a human, even if the human needs a little help. This principle can unify the problems of optimal presentation and computer-assisted interpretation, as it provides a method of tuning an algorithm so as to optimize presentation. I went into a little more detail when I wrote about the FDA's Image Quality research.

Capsules and Cortical Columns

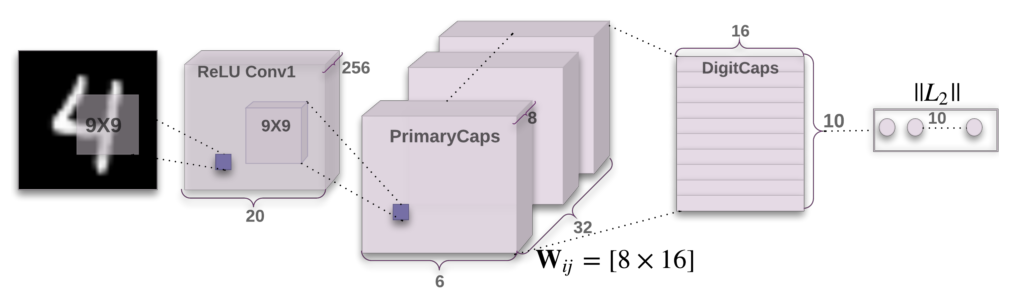

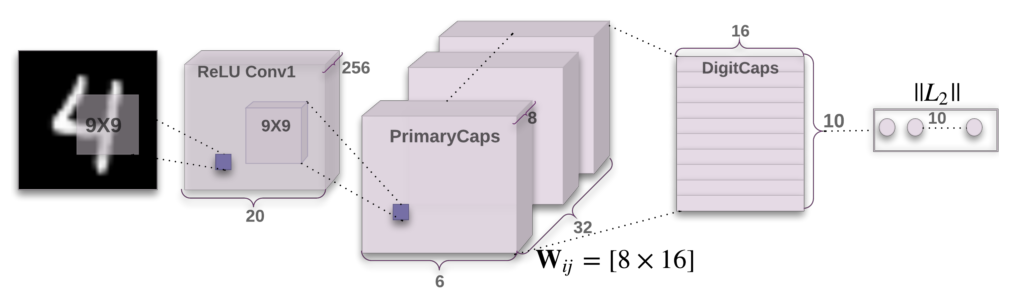

I'm still trying to figure out what to make of the Capsule routing mechanism proposed by Sabour et al late last year. It is potentially a move toward greater biological plausibility, if the claim is that each Capsule corresponds to a cortical column. If the Capsule network does reflect what happens in the visual system, its routing algorithm would need to either develop or be learned.

My point of reference here is the Shifter circuit (Olshausen 1993), which never explained how its control weights would have been "learned", despite other biologically plausible characteristics. I've always understood that learning control weights is more difficult than learning filter taps for a convolutional network, or RBM weights. I guess that's why reinforcement learning is so much harder to get right than CNN learning. But I never really thought the Shifter control weights would be learned anyway--I expected they would be the result of developmental processes, just as topographic mappings come from development.

The paper on training the Capsule network doesn't mention any explicit topographic constraint (as in Topographic ICA). I wonder how difficult it would be to use a dynamic Self-Organizing Map as a means of organizing the units in the PrimaryCaps layer. Would this simplify the dynamic routing algorithm?

LoFi Ascii Pixel

I'm looking at some 'lofi' image processing in F#, and thought I would begin by publishing the pieces as gists (because it's very lightweight). I started with a lofi ascii pixel output, so you can roughly visualize an image after you generate it. I will include some test distributions (gabor, non-cartesian stimuli) in a subsequent post.
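The idea fits in a few lines. This is a Python stand-in for illustration, not the F# gist itself; the character ramp and the `to_ascii` name are my own choices here.

```python
RAMP = " .:-=+*#%@"  # darkest to brightest, 10 levels


def to_ascii(image, ramp=RAMP):
    """Render a 2D list of numbers as text, one character per pixel."""
    flat = [v for row in image for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1  # avoid dividing by zero on a constant image
    top = len(ramp) - 1
    lines = []
    for row in image:
        # Rescale each pixel to an index into the ramp (multiply before dividing).
        lines.append("".join(ramp[int((v - lo) * top / span)] for v in row))
    return "\n".join(lines)


# A small radial blob as a test image.
size = 9
blob = [[-((x - 4) ** 2 + (y - 4) ** 2) for x in range(size)] for y in range(size)]
print(to_ascii(blob))
```

The image is normalized to its own min and max, so the output shows relative structure only, which is about all a rough visual check needs.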

Monday, January 29, 2018

Teramedica Zero-Footprint Viewer

- Server-side rendering is probably the easier option for an organization with limited technical resources. The delays are noticeable in the video, though I guess for an initial "Enterprise Imaging" offering it is probably good enough.

- Web-browser-based review is also key to zero-footprint viewing.

- One could argue that installing an app doesn't really count as "zero footprint", though I guess it does minimize the IT resources needed for deployment.

- Ability to deploy on a luminance-controlled monitor (i.e. in a radiology reading room) is probably also essential--I'm not sure Teramedica has that covered yet

- How would this compare to a fat client running on a Citrix farm? Probably somewhat better performance (due to lossy compression, which I guess radiologists are OK with?) but the Citrix farm's scalability would likely not be favorable, especially if OpenGL volume textures were being used for server-side rendering
